Manifesto

Natural Language Modeling

Sixty-six million years ago, the most complex organisms on Earth were destroyed, not by something smarter, but by something that needed less.

The creatures that inherited the planet were small, efficient, and built for a changed world. We are in a similar moment with artificial intelligence. The dominant paradigm (large language models trained with billions of dollars of compute, running in data centers that consume as much electricity as small nations) is optimized for conditions of abundance. Those conditions are ending.

The question is not whether the correction is coming. The question is whether the intelligence we are building is designed for the world after it.

Natural Language Modeling is that design. It begins with a redefinition: language is not human speech. Language is any signal that carries meaning — and the oldest languages on Earth are chemical, physical, and environmental. A pheromone trail. A pressure drop. A shift in light. These signals have been governing adaptive behavior for 3.8 billion years, long before the first word was spoken and long before the first transformer was trained.

NLM encodes this ancient intelligence into commodity silicon. Two working proof-of-concept systems demonstrate what becomes possible.

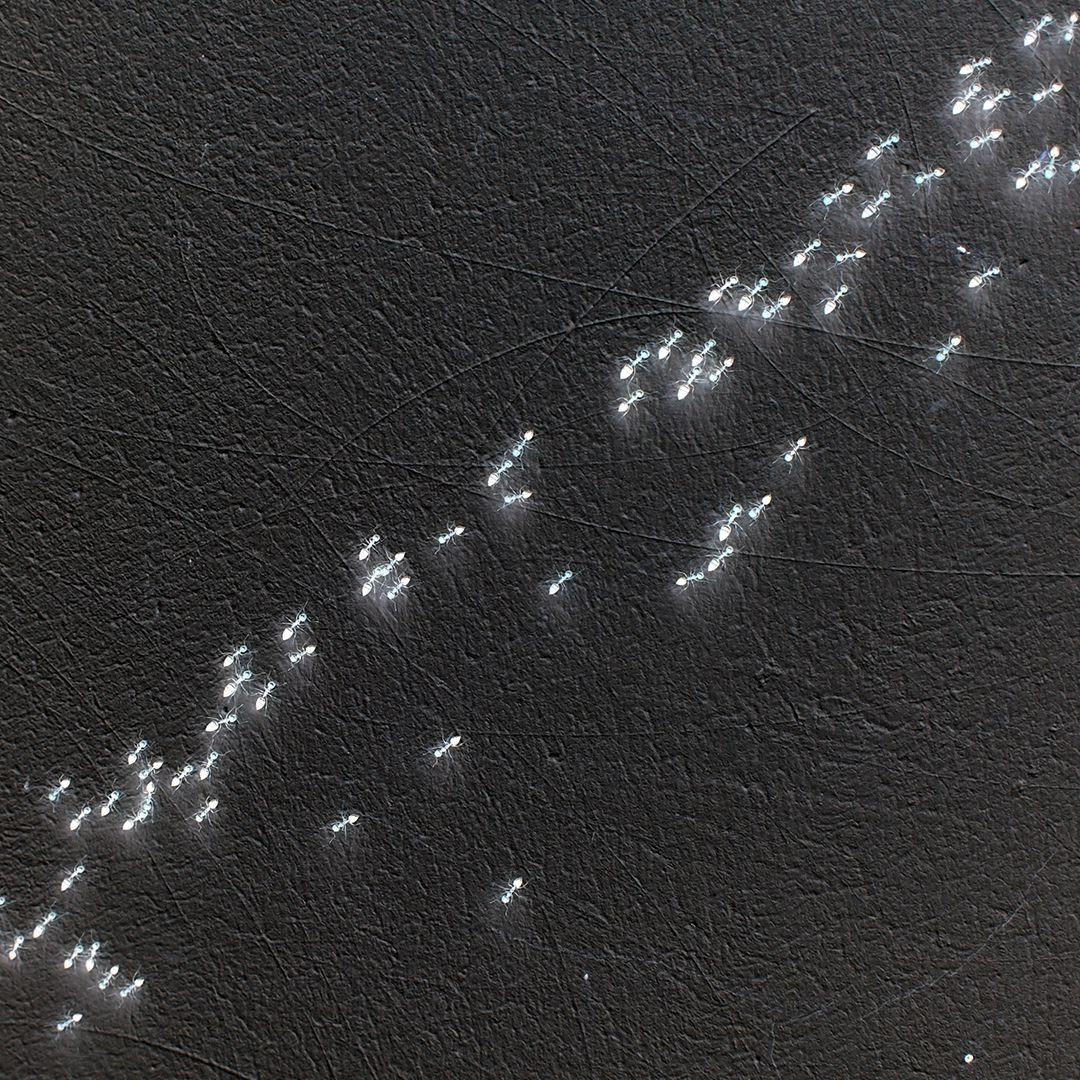

SlimeHive

A swarm of robots, each under $40, that navigates and coordinates using the same mechanism by which the single-celled slime mold Physarum polycephalum, in a 2010 laboratory experiment, grew a network closely resembling Tokyo's rail system.

Ceiba

A solar-powered infrastructure node, under $250, that produces electricity, clean water, light, and mesh connectivity for communities that cannot wait for the grid.

Neither contains a model. Neither requires the cloud. Both work now, in the field, built by one person in Maryland from parts available in any electronics market on any continent.

This is not a critique of AI. It is a correction to its definition. Intelligence is not computation. It is emergence — and emergence belongs to everyone.